- Home

- Weddings

- Portraits

- Journal

- Contact

- Alienskin exposure 7 license code

- Jake one snare jordan 2

- Php viewer android app

- Shankar mahadevan happy budday

- Resolume arena 5-1-4 crack

- Brush tool logic pro x 10-0-6

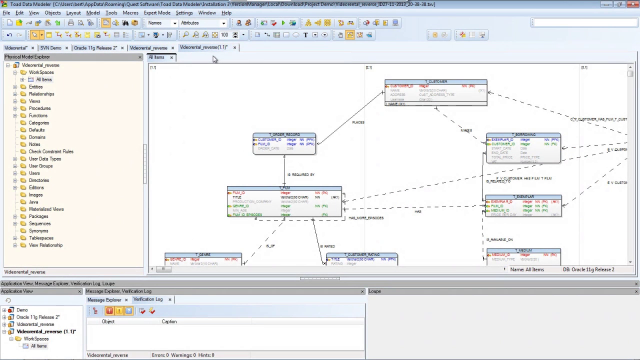

- Youtube toad data modeler inheritance

- Loic folloroux

- 3d earthquake enhanced edition

- United airline guitar

- Nani all movies

- Tu jaane na song movie

- Lean on 320 kbps

- Wondershare fantashow for mac download

- Csi miami season 5 episode 22

- Bus simulator games online 3d

- Sony sound forge pro 11 serial number

- Buds auto

- What men want near me

- Imovie 10-1-7 normalize

- George orwell 1984 john hurt

- Trucking news

- Nuance vocalizer chinese pre

- Tvf tripling season 2 watch online free 123movies

- Eminem without me gif

- Windows 13 change cursor color

- Bible black pc game yukiko creampie

- Hd movies hollywood in hindi dubbed download

# Youtube toad data modeler inheritance software

We describe a general modeling framework for laboratory data and its implementation as an information management system. The model utilizes several abstraction techniques, focusing especially on the concepts of inheritance and meta-data. Traditional approaches commingle event-oriented data with regular entity data in ad hoc ways. Instead, we define distinct regular entity and event schemas, but fully integrate these via a standardized interface. The design allows straightforward definition of a "processing pipeline" as a sequence of events, obviating the need for separate workflow management systems. A layer above the event-oriented schema integrates events into a workflow by defining "processing directives", which act as automated project managers of items in the system. Directives can be added or modified in an almost trivial fashion, i.e., without the need for schema modification or re-certification of applications. Association between regular entities and events is managed via simple "many-to-many" relationships. We describe the programming interface, as well as techniques for handling input/output, process control, and state transitions.

Over the past several decades, many of the biomedical sciences have been transformed into what might be called "high-throughput" areas of study, e.g., DNA mapping and sequencing, gene expression, and proteomics. In a number of cases, the rate at which data can now be generated has increased by several orders of magnitude. This scale-up has contributed to the rise of "big biology" projects of the type that could not have been realistically undertaken only a generation ago, e.g., the Human Genome Project. Such dramatic expansions in throughput have largely been enabled by engineering innovation, e.g., hardware advancements and automation. In particular, laboratory tasks that were once performed manually are now carried out by robotic fixtures. Biologists have steadily been adopting the automated and flexible manufacturing paradigms already established in industry to increase production, as well as to reduce costs and errors. The growing trend toward automation continues to drive the urgent need for proper software support.

Here, we use the word "software" to mean those computational tools that (1) process and analyze data, (2) organize and store data and provide structured data handling capability, and (3) support the reporting, editing, arrangement, and visualization of data. This context reflects the biologist's "data → information → knowledge" paradigm. Table 1 lists these three classes and gives an example from each that is relevant to our own specific area of interest: DNA sequencing. Each class is critical to the success of any large-scale project. The categories do not imply any procedural ordering; data that move through a processing pipeline are likely to be handled repeatedly by all three classes at various points. Moreover, certain tools may integrate parts of more than one of the classes. (This is true of some of the examples in Table 1.) In the typical scenario, a research community devotes much of its attention to developing software belonging to the first group mentioned above; these applications are non-trivial in the sense that they usually involve significant algorithmic complexity. In fact, they often evolve into separate, long-term research projects in their own right. For example, although some of the earliest work in DNA sequencing software focused on the problem of fragment assembly, this area is still active. Much effort is also spent on software belonging to the third group because the resulting applications directly serve end-user scientists as their primary "windows" to the data.
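The separation of regular entities from events, their many-to-many association, and the idea of a processing directive as a pipeline defined by data, as described above, might be sketched roughly as follows. This is only an illustration under stated assumptions, not the system's actual schema or programming interface: the names `Entity`, `Event`, `Directive`, `Lab`, and the step names `pick`, `prep`, `qc`, and `sequence` are all hypothetical.

```python
from dataclasses import dataclass
from itertools import count
from typing import List, Optional

# Hypothetical sketch: every class, method, and step name is an assumption.


@dataclass(frozen=True)
class Entity:
    """A regular entity, e.g. a sample or a plate."""
    entity_id: int
    kind: str


@dataclass(frozen=True)
class Event:
    """A processing event, stored separately from entity data."""
    event_id: int
    step: str


@dataclass
class Directive:
    """A processing directive: a pipeline expressed as an ordered list of steps."""
    name: str
    steps: List[str]

    def next_step(self, done: List[str]) -> Optional[str]:
        """Return the first step not yet performed, or None when finished."""
        for step in self.steps:
            if step not in done:
                return step
        return None


class Lab:
    """Holds events and the many-to-many links between entities and events."""

    def __init__(self) -> None:
        self._ids = count(1)
        self.events = {}      # event_id -> Event
        self.links = set()    # (entity_id, event_id) pairs

    def record(self, step: str, entities: List[Entity]) -> Event:
        """Record one event and link it to every entity it touched."""
        event = Event(next(self._ids), step)
        self.events[event.event_id] = event
        for entity in entities:
            self.links.add((entity.entity_id, event.event_id))
        return event

    def history(self, entity: Entity) -> List[str]:
        """Steps applied to an entity, in the order they were recorded."""
        ids = sorted(ev for (en, ev) in self.links if en == entity.entity_id)
        return [self.events[i].step for i in ids]


lab = Lab()
a, b = Entity(1, "sample"), Entity(2, "sample")
directive = Directive("example", ["pick", "prep", "sequence"])

lab.record("pick", [a, b])   # one event linked to two entities
lab.record("prep", [a])      # a second event linked to one entity

print(directive.next_step(lab.history(a)))  # sequence
print(directive.next_step(lab.history(b)))  # prep

# Changing the pipeline is a data edit; no class or table definition changes.
directive.steps.insert(2, "qc")
print(directive.next_step(lab.history(a)))  # qc
```

In this sketch the directive is ordinary data, so inserting the `qc` step changes what happens next to an item without touching the `Entity` or `Event` definitions, which mirrors the claim that directives can be modified without schema changes.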